The Risks of Bulk Content Generation

So what is bulk content generation, actually? Picture this: you need hundreds or even thousands of articles for your website or blog. Instead of sitting there and writing every single one yourself, you use AI to create them in bulk. It’s basically an automated way to pump out a ton of content really fast. Sounds kind of amazing at first, right? But then you stop and think about it for a second.

The quality of your content is super important for your online success. Good content brings in visitors, gets people to share your stuff, and helps your search rankings climb.

When you land on a site and see content that’s clearly low effort or just weird and irrelevant AI-made text, how do you feel about that brand? Usually not great. It feels off. That’s exactly why quality matters. It’s not just about filling up pages. It’s about actually giving people something useful and valuable.

A lot of businesses mess this up. They use AI to generate tons of content and don’t really think about how it might hurt their website’s performance. Sure, having a lot of content can help you get noticed by Google’s search algorithm. But what if that AI content doesn’t meet Google’s quality standards at all? Then your rankings can drop, and drop fast.

Low-quality AI-generated bulk content can cause things like:

- Damage to your brand’s reputation

- Lower search engine rankings

- Your site being removed from search results entirely

- Less visitor engagement

- Higher bounce rates (people leaving after just one page)

So when you’re thinking about AI-driven bulk content creation, remember this: it might look like a quick and easy way to fill up your site, but it’s not always helpful in the long run. Sometimes it does more harm than good, which is kind of the opposite of what you want.

But still, before you completely give up on using AI for SEO bulk content, keep in mind that not all generated content is the same. With careful management and some smart planning, like mixing human editing with SEO writing tools such as Junia AI, bulk content generation can actually be a useful part of a successful online strategy.

In this article, we’ll go into the risks of large-scale AI content creation and talk about how to deal with these problems so you can keep a strong and effective online presence.

Writesonic and Others: Real Help or Just Another Gimmick?

So I’ve tried using AI tools like Writesonic and Copy AI to pump out a bunch of blog posts at once. They run on GPT-3 or GPT-3.5 tech and all that. But is this actually a smart way to build a real blog, or just a shortcut that looks good on paper? Let’s talk about the main problems for a second.

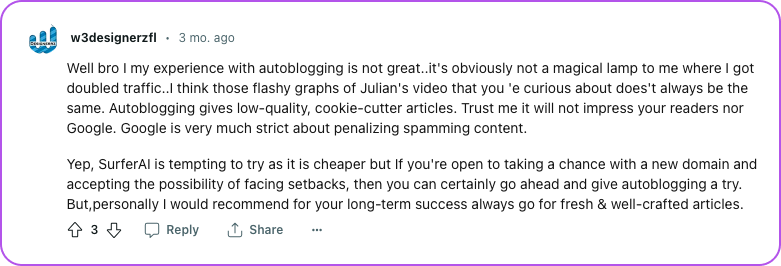

First thing, it’s not even clear if AI-made bulk content can rank well on Google long term. From what I’ve seen, content made with GPT-3 or GPT-3.5 often fails plagiarism checks, and it just obviously feels AI-written. It misses that human touch, you know? And since Google keeps updating its system all the time, this kind of mass-produced content might get hit later for trying to trick the search engine.

Also, these super fast blogs usually end up with a lot of duplicate content, which is pretty bad for SEO. Duplicate content confuses search engines, making it hard for them to pick the best version to show in search results, and that can lower rankings for all versions.

On top of that, creating many posts at once can get really expensive pretty quickly. And honestly, if this method worked so amazingly well, wouldn’t the companies selling it just use it themselves to dominate every niche?

You might be wondering about platforms like Writesonic that really push this whole bulk content creation idea. Are they actually delivering what they say, or just using clever marketing to sell the dream?

Based on some user reviews, people are kind of split on Writesonic. The content it makes feels similar to what you’d get from ChatGPT: it’s clear, sure, but it often lacks originality and the kind of depth you really need for professional blogging. These tools usually care more about quantity than quality, which hurts SEO, since Google prefers unique and actually valuable content. And yeah, automated articles almost always miss personal stories and real opinions that help readers connect emotionally.

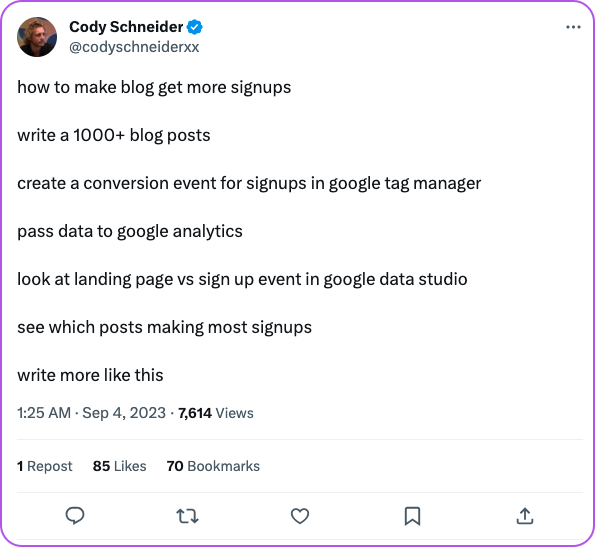

You’ll probably see YouTubers or Twitter users hyping up bulk-generated content a lot. But just keep in mind:

- They Push Mass Content Creation: A lot of them call AI article production a game-changer, but they don’t really mention the serious downsides.

- They Ignore Its Problems: Even though tons of articles might sound impressive, these influencers often skip how it can damage your credibility and SEO rankings in the long run.

Remember: all that glitters is not gold!

So yeah, be careful with advice that tells you to rely on mass AI-generated content. Try to focus on quality just as much as quantity when you plan out your content.

The Downsides of Using AI for Mass Content Generation

AI has totally changed how we can create huge amounts of content really fast. But just like any other tool, it definitely has some problems too. In this section, we’re going to talk about the main disadvantages of using AI to generate content in bulk, and why it’s not always as perfect as it sounds.

Lack of Human Touch and Creativity in Mass-Produced AI Content

One big problem with using only AI to create content is that it doesn’t really have that human touch or real creativity. Sure, AI can pump out a lot of content super fast, but it usually misses the originality, uniqueness, and personal relevance that a real human writer brings in. So you end up with content that feels kind of bland and flat, and it just doesn’t connect that well with your audience.

Think for a second about what actually makes your website special. It’s your authentic voice, your personality, your weird little ideas, your creativity. If you only rely on AI-generated content, you start losing that unique quality that makes your brand stand out in the first place. Your audience might start to feel like your site is generic or kind of impersonal without real human input. To keep the quality high in mass-produced AI content, the human touch is key to making it original, relevant, and interesting.

Quality and Accuracy Problems in AI-Generated Content

One problem with AI-generated content is that it can have quality and accuracy issues. Even though AI has improved a lot recently, it still struggles to fully understand context, subtle meanings, and more complex subjects. Because of that, it can make mistakes, get facts wrong, or write sentences that just sound weird or unclear. All this can lower the overall quality of the content and cause confusion or misunderstandings for readers.

Also, because AI creates content based on patterns and existing information, it might accidentally include biased or incorrect details without really “knowing” it. This can hurt your brand's reputation if the content is published without a careful review first. So, checking the accuracy and quality of AI-generated content is really important if you want to keep your audience’s trust and keep high standards in your content.

Not Enough Time to Manually Edit All AI-Generated Content

When you use AI to make a lot of content, it can spit out way more articles than people can actually keep up with. Like, editors just don't have time to go through every single one super carefully. So important stuff like grammar, spelling, and how smoothly everything flows can slip by, and then the overall quality ends up dropping.

AI is great for saving time when writing, but yeah, humans still need to check the content to keep it high quality. A good way to deal with large amounts of content is setting clear editing steps, or kind of combining automation with certain human reviews, so you can keep things under control. If there's no proper editing, even the best AI-written content might not really meet what people expect.
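To make that idea of “automation plus certain human reviews” a bit more concrete, here’s a rough Python sketch of a triage step. Everything in it is an assumption for illustration only: the `Draft` class, the `automated_checks` and `triage` functions, the 300-word minimum, and the specific checks are all made up, not part of any real tool. The point is just that cheap automated checks weed out the obviously broken drafts, so your editors only spend time on the ones worth reading.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    """A hypothetical AI-generated draft waiting for review."""
    title: str
    body: str
    issues: list = field(default_factory=list)


def automated_checks(draft, min_words=300):
    """Run cheap checks that don't need a human. Returns a list of issues."""
    issues = []
    if len(draft.body.split()) < min_words:
        issues.append("too short")
    # Assumed example of leftover model boilerplate worth catching early.
    if "as an ai language model" in draft.body.lower():
        issues.append("leftover AI boilerplate")
    if not draft.title.strip():
        issues.append("missing title")
    return issues


def triage(drafts, min_words=300):
    """Split drafts into two queues.

    Nothing is auto-published: a draft that passes the automated checks
    still goes to a human editor, it just skips the "fix the basics" pile.
    """
    queues = {"needs_rewrite": [], "human_review": []}
    for draft in drafts:
        draft.issues = automated_checks(draft, min_words)
        key = "needs_rewrite" if draft.issues else "human_review"
        queues[key].append(draft)
    return queues
```

Notice there’s no “publish” queue on purpose. In this sketch the automation only decides what a human looks at first, not what goes live.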

Risk of Plagiarism and Duplicate Content with AI-Generated Articles

Plagiarism and duplicate content are pretty big risks when you use AI to create a lot of content. Since AI learns from stuff that already exists, it can accidentally spit out text that’s super close to what’s already online. Content made by tools like GPT-3 or GPT-3.5 often doesn’t pass plagiarism tests and can be spotted as AI-written pretty easily. So yeah, checking for plagiarism is really important before you publish any AI content.

Search engines can filter out or demote pages with duplicate content, and that can really hurt your site’s visibility and ranking. Because of that, it’s super important to make sure any AI-generated content is original and unique before you put it out there.
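If you want a quick sanity check before publishing a batch, here’s a minimal Python sketch that flags drafts that look too similar to each other. It compares overlapping three-word chunks (“shingles”) using Jaccard similarity. To be clear, this is not how Google or any plagiarism checker actually works, and the function names and the 0.5 threshold are just assumptions for the example. It also only compares your own drafts against each other, not against the rest of the web.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Break text into a set of overlapping word groups of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Shared shingles divided by all distinct shingles across both sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_near_duplicates(drafts, threshold=0.5):
    """Return (name, name, score) for each pair at or above the threshold.

    `drafts` maps a draft name to its text. The threshold is an arbitrary
    starting point, so tune it against your own content.
    """
    sets = {name: shingles(text) for name, text in drafts.items()}
    flagged = []
    for (name_a, set_a), (name_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            flagged.append((name_a, name_b, score))
    return flagged
```

Comparing every pair gets slow once you have thousands of drafts, so treat this as a spot check for a single batch rather than something to run on your whole site.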

AI has made creating content faster and easier, which is nice, but it also comes with some downsides. It might not have real human creativity, it can sometimes create lower quality or even inaccurate information, it often needs a lot of manual editing, and there’s always that risk of plagiarism or duplicates. To keep your website’s content authentic and high-quality, you really need to find a good balance between using AI and adding human input.

How Poor Content Hurts SEO and User Experience

In SEO, content is super important. But yeah, not all content is actually good. The quality, relevance, and originality of your content can really affect how well your website ranks in search results, sometimes more than people think.

I want to share a personal story. I managed a blog that used tons of AI-generated content from a tool like Writesonic. At first, our website traffic went up a lot and it felt really good, honestly kind of like we hacked the system. But after a few months, our Google ranking suddenly dropped hard because of SEO penalties.

We later found out that our "unique" articles were basically just rewritten versions of other stuff already online. They were grammatically correct and looked original on the surface, but they didn’t really have real depth or any unique value at all.

This whole thing taught me a pretty big lesson. Just pumping out a lot of low-quality content without caring about the actual value can hurt your SEO more than it helps, and maybe even reverse your progress. To avoid pitfalls like that, it’s crucial to follow SEO best practices, which focus on delivering real value through high-quality content.

Search Engine Penalties from AI Bulk Content Generation

Search engines like Google want to show people the most relevant and actually useful results. So if your site is packed with a lot of low-quality, automatically generated content, it can start causing problems. Google’s systems are pretty good at spotting bad content now, and they really don’t favor it at all.

So what happens then? Well, your website might drop in search rankings, sometimes a lot, or even get removed from search results completely. And honestly, trying to recover from this kind of thing can be really tough and frustrating.

Remember: Google’s algorithms reward websites with high-quality content and lower the ranking of those that offer a poor user experience.

Less Organic Traffic Because Content Isn’t Relevant

When you crank out a bunch of content really fast, there’s a good chance a lot of it won’t actually match what people are searching for. If your website’s content doesn’t really fit what users need from their search, they probably won’t click on your site in the search results. And yeah, that ends up hurting your ranking over time.

And even if some visitors do land on your site, they usually won’t stick around very long if the content doesn’t actually help them or answer their questions. When there’s this kind of mismatch, user engagement drops, and more people just leave your site really quickly. And that kind of leads into the next problem, which is how user engagement and bounce rates are affected.

How User Engagement and Bounce Rates Can Be Affected

User engagement is basically how people interact with your website. Like, are they clicking around to different pages? Are they actually staying on each page for a decent amount of time, or just skimming and leaving right away?

Bounce rate is the percentage of visitors who leave your site after looking at only one page. If your bounce rate is high, it usually means people didn’t find what they were looking for, or they just weren’t happy with the content at all.
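Here’s that definition as a tiny Python sketch, in case you want to compute it from your own numbers. The function name and inputs are assumptions for illustration, and keep in mind that some analytics tools define bounce rate a little differently, so your dashboard might not match this exactly.

```python
def bounce_rate(single_page_sessions, total_sessions):
    """Percentage of sessions where the visitor viewed only one page."""
    if total_sessions <= 0:
        raise ValueError("total_sessions must be positive")
    if not 0 <= single_page_sessions <= total_sessions:
        raise ValueError("single_page_sessions must be between 0 and total_sessions")
    return 100.0 * single_page_sessions / total_sessions


# Example: 30 of 120 sessions ended after a single page.
print(bounce_rate(30, 120))  # 25.0
```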

So imagine someone lands on your site from a search engine or a link, and they’re expecting some really useful information. But instead, they run into low-quality or totally irrelevant content. What do you think happens? Yeah, they click away pretty fast.

This kind of behavior tells search engines that your site isn’t helpful or relevant enough, and that can cause your rankings to drop. Then it turns into this ongoing cycle that hurts both your SEO and the overall user experience too.

So what does this mean for you? Using AI to pump out a lot of content really fast might sound like a nice shortcut, but it can actually cause more problems than it solves. It’s usually much better to focus on creating real quality content, even if it means having less of it.

Keeping Your Brand's Voice and Tone

There’s just so much content on the internet now, it’s kind of overwhelming. So how does your brand actually stand out in all that noise? What makes it really feel different? A lot of the time, the answer is your brand voice and tone. That’s basically how your brand “sounds” when it talks. It shows your personality and what you value, and it kind of guides how you talk to your audience everywhere. But then, yeah, what happens when you start using AI in all of this?

AI has changed a lot of industries, and content creation is definitely one of them. But it’s not perfect. It also brings a bunch of new problems. One big problem is that AI has a hard time really copying a brand’s unique voice. Like, it can sound pretty smart and all, but it doesn’t really have that human touch, that subtle feeling, the little details that make a brand’s voice feel special and real.

Just imagine you run an outdoor gear company with this chill, adventurous vibe. Super laid-back but exciting. Now imagine the AI starts writing super formal, stiff, corporate-sounding blog posts for your website. That would feel weird, right? Like something is off. It just wouldn’t feel like you at all.

Keeping a consistent tone across all your website content is actually really important. Consistency leads to familiarity. And familiarity slowly builds trust over time. Then that trust is what turns people into loyal customers who keep coming back.

Think about your favorite brands for a second. Whether they’re funny or serious, bold or more classy, their voice usually stays pretty much the same wherever you see them. On social media, emails, ads, all of it. That consistency makes it easier for people to recognize them and connect emotionally with the brand.

But when you use AI to pump out tons of content fast, it can accidentally change the tone and style in ways that break that consistency. You might end up with one blog post that sounds like Shakespeare and then another one that sounds like some cold robot. And honestly, that kind of mix confuses readers and hurts your brand in the long run.

Just remember, realness is what actually connects with people. When content feels genuine, it builds trust and credibility. That’s something random, mass-produced content usually can’t really do, or at least not very well.

So how do we keep our unique brand voice while still using AI to help us?

The goal isn’t to choose one and completely throw the other away. It’s more about using both together in a smart way (we’ll get into this more next). The key is to let AI help you create content, especially to save time, but still have real humans review, edit, and guide it so everything stays consistent and feels authentic.

And always make sure your content, whether it’s fully written by people or heavily helped by machines, stays true to your brand voice and tone.

Ways to Avoid Problems When Creating Large Amounts of Content

Do you ever feel kind of stuck when you’re trying to make a lot of content, but still want it to actually be good? Yeah, it’s pretty normal. Don’t worry about it too much. Anyone can learn how to create great content. It just takes some time and practice. With the right plan in place, you can avoid a bunch of common problems that come up when making lots of content. Here are some helpful tips:

1. Combining AI with Human Editing and Proofreading

Relying only on AI to create content can lead to a bunch of problems, like boring writing, random mistakes, and sometimes even plagiarism. But what if you could still use AI’s power and keep that real human voice in there too? That’s basically where Junia AI comes in and helps out.

Junia AI gives you a pretty smart solution by mixing artificial intelligence with real human editing and proofreading. So your content stays high-quality, original, and actually interesting to read.

Just picture this for a second. You’re staring at a blank page, no idea how to start or how to say what’s in your head. It’s annoying. Junia AI jumps in and gives you a basic outline based on your keywords. From there, you can tweak it however you like, add your own ideas, change the tone so it fits your brand and your style and all that.

The result? You end up with a solid article that sounds like your brand and really connects with your audience, and you get it done way faster than starting totally from scratch.

2. Using Content Curation and Repurposing

Content curation basically means you’re collecting useful information about a topic from different places and putting it together in a clear way. Kind of like doing the research for your audience. This helps your readers a lot because it saves them time from having to hunt around for all the info by themselves.

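Repurposing is the other half of this: you take something you’ve already made and turn it into a new format. Not sure how to repurpose content well? Here are some ideas you can try: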

- Turn a blog post into an interesting infographic or use AI text-to-video tools to make a video out of it.

- Pick out key points from long articles and turn them into short social media posts.

- Change customer reviews into case studies that tell a bit more of the story.

- Turn webinars into podcasts or blog posts, or sometimes even both if it fits.

Repurposing helps you reach different parts of your audience who like different types of content. Some people prefer reading long articles, others like watching short videos, and some just want to listen to podcasts while they do something else. So yeah, it kind of lets you show up everywhere without always starting from scratch.

3. Taking Your Time to Create Content

"Slow and steady wins the race."

When you’re making interesting and engaging content, rushing usually just gives you weak and kind of shallow results. Instead, try more of a “slow churn” approach. Focus on quality, not just pumping out a ton of stuff. Here are some reasons a slower pace pays off:

- Engagement is Important. When you work at a slower pace, you actually have more time to connect with your followers. Not just replying to questions, but really starting and keeping meaningful conversations that help build a real community. Engagement isn’t only about likes and shares. It’s about forming real connections and slowly gaining loyal followers who stick around.

- Respecting Time. Your audience’s time actually matters a lot. They don’t want to be flooded with a bunch of poor-quality content. When you create fewer but better, well-made pieces, it shows you respect their attention. And it gives them useful information they can actually trust and come back for.

- Better SEO Results Over Time. Content that’s made slowly usually has more detail and more depth than stuff that’s rushed out. That kind of content tends to rank higher in search engines because it offers real value. When you focus on quality, your SEO gets better in the long run, even if it feels slower at first.

Just remember this: taking your time doesn’t mean you’re being lazy. It really doesn’t. It just means you’re pacing yourself so you can make valuable content that actually connects with your audience and gives them helpful information they can use.

To learn how many AI-generated blog posts to publish daily for the best engagement and SEO, you can check out this helpful guide.

Handling the challenges of creating lots of content isn’t about avoiding technology or only sticking to old school methods. It’s more about using things smartly, like combining tools such as Junia AI with proven stuff like human editing and reusing content in different formats.

Content creation isn’t just about producing a huge amount. It’s really about sharing valuable information in an interesting way that actually connects with your audience. And yes, you can totally do this even when you’re making content in bulk!

Finding Balance in Content Creation

Technology has really changed how we create content now. With AI, we can pump out a ton of content super fast. But there's a catch. Kinda like a coin with two sides, bulk content creation comes with both good stuff and not so good stuff.

Just remember, even though AI can write a lot of articles really quickly, the quality isn’t always the best. That’s why going back and reviewing everything carefully is super important. I mean, don’t you think quality matters more than quantity when you’re making content?

Good content should actually mean something and be helpful to your readers. That’s what makes them come back again. So yeah, creating lots of content can save time and effort, but it’s just as important to make sure it’s accurate, relevant, and genuine.

"The key is to balance automation with human creativity."

Exactly. It’s not about only using AI or totally ignoring it. Try thinking of AI like a tool that helps you work better. You can let AI draft content really fast, then you come in and fix it up, add your voice, and improve it.

Just imagine an automated system doing the boring stuff like keyword research or metadata creation for you, while you get to focus on the creative parts. Sounds pretty great, right? With [Junia AI](https://www.junia.ai/blog/write-more-content-in-less-time), this is actually possible.

Remember when we talked about reusing old content? That’s another smart trick to keep quality high while still making more content faster. You can update old posts with fresh info or present them in a new way and it kind of gives them a new life.

So yeah, just keep in mind, bulk content creation has a lot of benefits, but finding the right balance really matters. Try to focus on quality over quantity and honestly, both your readers and search engines are going to appreciate it.